import torch
import torch.nn as nn
import torch.optim as optim
import torchvision.transforms as transforms
from torch.utils.data import DataLoader
from torchvision.datasets import MNIST
import matplotlib.pyplot as plt

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

class CustomCNN(nn.Module):
    """Three conv/ReLU/max-pool stages followed by a two-layer classifier."""

    def __init__(self):
        super().__init__()
        # Each 3x3 convolution with padding=1 keeps the spatial size;
        # each 2x2 max pool with stride 2 halves it (rounding down).
        self.conv1 = nn.Conv2d(1, 32, kernel_size=3, stride=1, padding=1)
        self.relu1 = nn.ReLU()
        self.pool1 = nn.MaxPool2d(kernel_size=2, stride=2)
        self.conv2 = nn.Conv2d(32, 64, kernel_size=3, stride=1, padding=1)
        self.relu2 = nn.ReLU()
        self.pool2 = nn.MaxPool2d(kernel_size=2, stride=2)
        self.conv3 = nn.Conv2d(64, 128, kernel_size=3, stride=1, padding=1)
        self.relu3 = nn.ReLU()
        self.pool3 = nn.MaxPool2d(kernel_size=2, stride=2)
        self.flatten = nn.Flatten()
        # For 28x28 MNIST input the spatial size goes 28 -> 14 -> 7 -> 3,
        # so the flattened feature vector has 128 * 3 * 3 entries.
        self.fc1 = nn.Linear(128 * 3 * 3, 512)
        self.relu4 = nn.ReLU()
        self.dropout = nn.Dropout(0.5)
        self.fc2 = nn.Linear(512, 10)

    def forward(self, x):
        x = self.pool1(self.relu1(self.conv1(x)))
        x = self.pool2(self.relu2(self.conv2(x)))
        x = self.pool3(self.relu3(self.conv3(x)))
        x = self.flatten(x)
        x = self.relu4(self.fc1(x))
        x = self.dropout(x)
        # Return raw logits; nn.CrossEntropyLoss applies log-softmax itself.
        x = self.fc2(x)
        return x

# Hyperparameters
batch_size = 64
learning_rate = 0.001
epochs = 10

# ToTensor scales pixels to [0, 1]; Normalize((0.5,), (0.5,)) then maps them to [-1, 1].
transform = transforms.Compose([transforms.ToTensor(), transforms.Normalize((0.5,), (0.5,))])

train_dataset = MNIST(root='./data', train=True, download=True, transform=transform)
test_dataset = MNIST(root='./data', train=False, download=True, transform=transform)

train_loader = DataLoader(dataset=train_dataset, batch_size=batch_size, shuffle=True)
test_loader = DataLoader(dataset=test_dataset, batch_size=batch_size, shuffle=False)

model = CustomCNN().to(device)
criterion = nn.CrossEntropyLoss()
optimizer = optim.Adam(model.parameters(), lr=learning_rate)

# Per-epoch average losses, used for the loss curve
train_loss_values = []
test_loss_values = []

for epoch in range(epochs):

model.train()

total_train_loss = 0.0

for i, (images, labels) in enumerate(train_loader):

images, labels = images.to(device), labels.to(device)

optimizer.zero_grad()

outputs = model(images)

loss = criterion(outputs, labels)

loss.backward()

optimizer.step()

total_train_loss += loss.item()

average_train_loss = total_train_loss / len(train_loader)

train_loss_values.append(average_train_loss)

model.eval()

total_test_loss = 0.0

with torch.no_grad():

for images, labels in test_loader:

images, labels = images.to(device), labels.to(device)

outputs = model(images)

loss = criterion(outputs, labels)

total_test_loss += loss.item()

average_test_loss = total_test_loss / len(test_loader)

test_loss_values.append(average_test_loss)

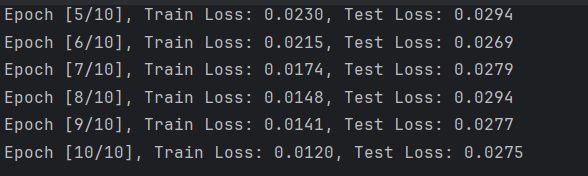

print('Epoch [{}/{}], Train Loss: {:.4f}, Test Loss: {:.4f}'.format(epoch + 1, epochs, average_train_loss, average_test_loss))

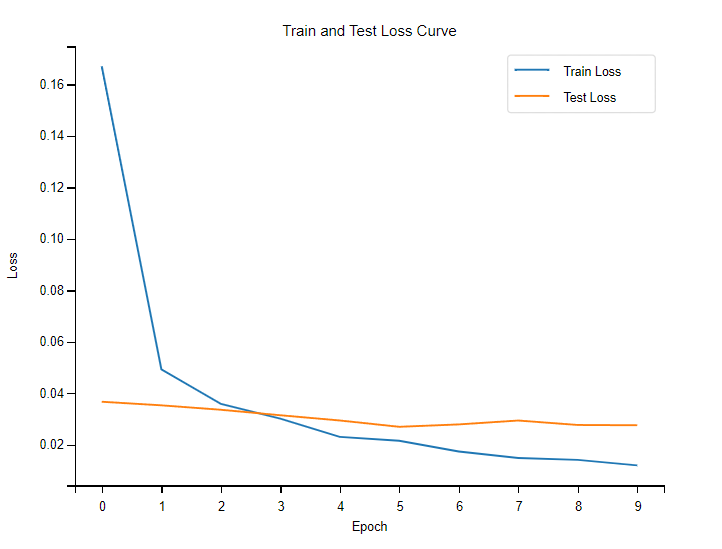

# Plot the per-epoch training and test loss
plt.plot(train_loss_values, label='Train Loss')
plt.plot(test_loss_values, label='Test Loss')
plt.xlabel('Epoch')
plt.ylabel('Loss')
plt.title('Train and Test Loss Curve')
plt.legend()
plt.show()

# Save only the learned parameters (the state dict), not the full model object
torch.save(model.state_dict(), 'custom_cnn_model.pth')
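The `128 * 3 * 3` input size of `fc1` depends on the 28x28 MNIST input and on the three conv/pool stages. As a sanity check, the following minimal sketch recomputes that number in plain Python using the standard output-size formula for convolution and pooling layers (floor rounding, no dilation). The helper names `conv_out` and `flattened_features` are hypothetical and not part of the script above; the layer settings are copied from `CustomCNN`.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output length along one spatial axis for a conv or pooling layer.

    Assumes dilation 1 and floor rounding (the PyTorch defaults).
    """
    return (size + 2 * padding - kernel) // stride + 1


def flattened_features(input_size=28, channels=(32, 64, 128)):
    """Return (side length, flattened feature count) after the conv/pool stack."""
    size = input_size
    for _ in channels:
        # 3x3 convolution, stride 1, padding 1: spatial size is unchanged
        size = conv_out(size, kernel=3, stride=1, padding=1)
        # 2x2 max pool, stride 2: spatial size is halved, rounding down
        size = conv_out(size, kernel=2, stride=2)
    return size, channels[-1] * size * size


side, features = flattened_features()
print(side, features)  # 3 1152, i.e. 128 * 3 * 3
```

If the input resolution changes (for example to 32x32), the same helper gives the value `fc1` would need, which is easier than working it out by hand each time.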